On May 13, technology news outlet FastTech reported that modern computing, which began in 1946 with ENIAC, widely regarded as the first general-purpose electronic computer, and was fundamentally shaped by John von Neumann’s stored-program architecture, could soon undergo a seismic shift driven by advances in artificial intelligence. These developments could redefine the foundations of how computers operate, operating systems included.

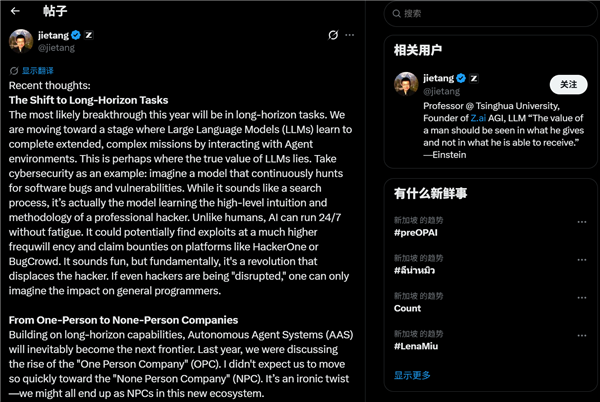

Tang Jie, Founder and Chief Scientist of Zhipu, shared his views online on large AI models. He said this year might bring breakthroughs in what he calls Long-Horizon Tasks: work in which AI agents must operate continuously to accomplish complex goals.

He predicts that autonomous intelligent systems (AAS) will be the next pivotal frontier. Whereas discussion last year centered on the rise of one-person companies (OPC), the focus is now shifting rapidly toward companies with no employees at all (NPC). Achieving true AAS rests on three core technical pillars: memory, lifelong learning, and self-judgment. For memory, approaches such as extended context windows and retrieval-augmented generation (RAG) are promising.

Furthermore, shortening model update cycles could serve as an indirect route to continuous learning. AI models both in China and abroad are now being upgraded roughly every month, pushing the industry forward at an unprecedented pace. Self-evaluation, however, remains a significant challenge for large models. Tang Jie pointed out that Anthropic’s Opus 4.7 has shown early signs of self-correction and judgment.

He speculates that models like Claude might already have the ability to write their own code, clean data, generate synthetic datasets, and retrain themselves. Rumor has it that Anthropic plans to acquire around two million chips next year, likely dedicated solely to autonomous training tasks.

Tang argues that a context window of around one million tokens is an essential baseline, and that memory and lifelong learning can be solved through engineering, however challenging. Currently, controlling the environment in which a model operates, which he refers to as harnessing environments, is viewed as a key breakthrough. Self-judgment represents a critical threshold, and achieving fully autonomous self-training could mark the ultimate evolution of large models.

During this transitional period, Tang emphasizes that every application must be rebuilt in an AI-native manner, potentially moving beyond the traditional concept of an app altogether. The most profound challenge lies in redesigning the operating system itself. In place of the conventional desktop interface, he envisions a future dominated by an OS built on a large model, where applications are generated on demand.

Such a transformation would fundamentally challenge the von Neumann architecture, which has governed computing for nearly 80 years, and would represent a revolutionary shift across the entire field of computer science.

Images accompanying the article depict Tang Jie and the revolutionary future of AI-driven computing, illustrating how these developments could dismantle and replace the traditional architecture with intelligent, adaptive systems.