A recent study by researchers at Cornell University has raised concerns that large language models (LLMs) can lose capability after prolonged exposure to low-quality internet content. The findings suggest that such exposure can cause something akin to “brain rot,” in which these AI systems show notable declines in comprehension, reasoning, and ethical consistency.

The research has renewed debate within the tech community over the so-called “Dead Internet Theory”: the idea that the internet is gradually losing its human character as machine-generated or low-quality content crowds out genuine creative input.

The study focused on widely used AI models, including Meta’s Llama 3 and Alibaba Cloud’s Qwen 2.5. The researchers built training datasets with varying ratios of high- and low-quality content to measure how exposure to inferior material affects performance. The results were striking: when the models were trained solely on low-quality data, their accuracy on a reasoning benchmark dropped from roughly 75% to 57%, and their accuracy on long-text comprehension fell from 84% to just over 52%.

The researchers also observed a “dose-response” effect: the larger the share of poor-quality data in the training mix, the more severely the models’ abilities degraded. The affected models tended to shorten or skip steps in their reasoning and produced more superficial responses. Their ethical consistency also weakened, a shift described as “personality drift,” which made them more prone to producing inaccurate or inappropriate content.

The implications of these findings have stirred concern among tech leaders and industry observers. Alexis Ohanian, co-founder of Reddit, recently voiced worries about the state of the internet, stating, “A large portion of what’s online now is essentially ‘dead’ — whether it’s AI-generated, semi-automated, or flooded with low-quality material.” He emphasized the importance of future platforms being able to verify human authenticity to preserve meaningful digital interactions.

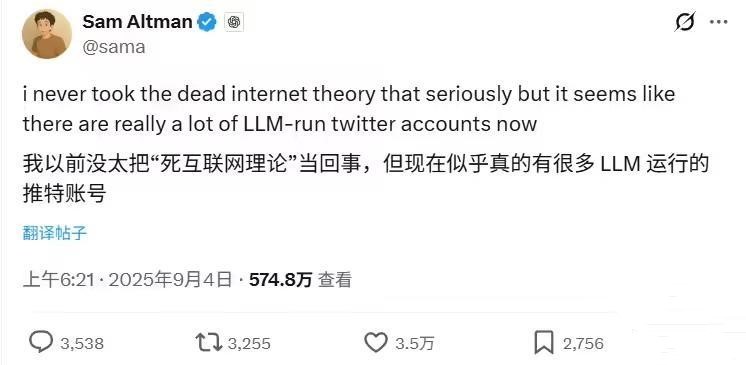

OpenAI CEO Sam Altman has voiced similar concerns, suggesting that the “Dead Internet Theory” is already playing out. He noted that many accounts on X (formerly Twitter) now appear to be run by AI, raising questions about the authenticity of online discourse.

Concerns about content quality are compounded by a study from researchers at Amazon Web Services, which estimated that about 57% of online content is either AI-generated or machine-translated. This trend is undermining the reliability of search results and of online information more broadly.

Twitter co-founder Jack Dorsey has also warned that it is becoming harder to distinguish real content from fake, as advances in deepfakes and video synthesis make verification increasingly difficult. He said that people will need to rely more on personal experience and critical judgment to separate truth from fiction.

As AI-generated content continues to spread rapidly, experts caution that if the cycle of low-quality information persists, the feared “dead internet” could soon become a reality: a digital landscape in which authenticity and human influence steadily diminish.